Real World Solutions for the Climate Crisis - Part 2

Part 2 picks up where Part 1 left off, first examining the false solutions churned out by incumbents, then outlining a framework of potential solutions to the greatest threat of our times.

This directly follows on from Part 1.

The False Solutions Fantasy

I already looked at false solutions in a previous article on the UK’s zero emissions. Here I’ll cover them in more depth.

Carbon Capture and Storage (CCS) is highly popular with technocratic wizards. It’s now also known as Carbon Capture Utilisation and Storage (CCUS), presumably to give the discredited process a new look.

According to the International Energy Agency (IEA), ‘Momentum is growing for CCUS’. The IEA released a ‘flagship’ report extolling how CCUS ‘would more than triple’ the capture of CO2 ‘to around 130 Mt per year.’ Apparently it’s the only solution that will both reduce emissions and remove CO2, and is ‘a critical part of “net” zero goals.’ CCUS will form part of ‘a broad suite of technologies.’ The report builds on this fallacy as it notes (emphasis added):

Alongside electrification, hydrogen and sustainable bioenergy, CCUS will need to play a major role.

Business-as-usual, unsubstantiated projections then ensue as the report waxes lyrical about ‘30 commercial facilities’ on the table, with various projects ‘now nearing a final investment decision’ that ‘represent an estimated potential investment of around USD 27 billion.’ The emphasis is on maintaining heavy industrial output that incurs huge CO2 emissions. It also notes that:

Captured CO2 is a critical part of the supply chain for synthetic fuels from CO2 and hydrogen.

And:

CCUS can support a rapid scaling up of low-carbon hydrogen production to meet current and future demand from new applications in transport, industry and buildings.

With everybody aiming for net zero by 2050, the IEA for some reason thinks it’ll be 2070 before net zero is reached. This highly speculative outline from the IEA might apply in Neverland, but it certainly doesn’t offer real world solutions. The report is a classic systemic GIGO (garbage in, garbage out) scenario, as we’ll see.

A briefing from the Center for International Environmental Law (CIEL) outlines just how useless and unnecessary CCS or CCUS is. It doesn’t actually ‘remove’ CO2 from the atmosphere. It’s supposed to capture some of the emissions from combustion of fossil fuels. Most of the CO2 already captured is injected into oil wells to enhance oil recovery, which ‘exacerbates global warming by boosting oil production and prolonging the fossil fuel era.’ But it doesn’t seem to matter whether it works or not. There seems to be a prevailing self-delusional myth that such processes will maintain profitability. As CIEL notes:

Existing CCS facilities capture less than 1 percent of global carbon emissions. The 28 CCS facilities currently operating globally have a capacity to capture only 0.1 percent of fossil fuel emissions, or 37 megatons of CO2 annually. Of that capacity, just 19 percent, or 7 megatons, is being captured for actual geological sequestration.
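The figures in that quote can be cross-checked with some simple arithmetic. The following is a minimal sketch that uses only the numbers quoted above; the variable names are my own, and because the quoted percentages are rounded, the implied global total is a rough figure rather than an official estimate.

```python
# Figures taken from the CIEL quote above (rounded in the source).
capture_capacity_mt = 37.0          # Mt CO2 per year across the 28 facilities
share_of_fossil_emissions = 0.001   # "0.1 percent" of fossil fuel emissions
sequestered_share = 0.19            # "19 percent" goes to geological storage

# If 37 Mt is 0.1 percent, the implied total of fossil fuel emissions is:
implied_fossil_emissions_mt = capture_capacity_mt / share_of_fossil_emissions

# Portion of the capacity that is actually sequestered underground:
sequestered_mt = capture_capacity_mt * sequestered_share

# Sequestered CO2 as a fraction of the implied fossil fuel emissions:
sequestered_fraction = sequestered_mt / implied_fossil_emissions_mt

print(f"Implied fossil fuel emissions: {implied_fossil_emissions_mt:,.0f} Mt CO2/yr")
print(f"Geologically sequestered: {sequestered_mt:.2f} Mt CO2/yr")
print(f"Share of fossil emissions sequestered: {sequestered_fraction:.3%}")
```

In other words, on the briefing's own numbers, the CO2 that is genuinely locked away amounts to roughly two hundredths of one percent of fossil fuel emissions.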

Using the example of Petra Nova, a major facility in the US, CIEL notes:

During its operation, the CCS system only captured 7 percent of the power plant’s total CO2 emissions, well below the company’s promises to reduce CO2 emissions by 90 percent. The captured carbon from Petra Nova had been used for enhanced oil recovery, but the 2020 collapse in oil price and demand rendered this uneconomic.

As the inevitable transition away from fossil fuel dependency takes place over the next couple of decades, these systems will become stranded assets. They are simply not economic:

For a new-build gas-fired plant, CCS could more than double the construction costs and increase the cost of energy produced (known as levelized cost of energy) by up to 61 percent.

And it’s the same story for the industrial sector, where high levels of emissions occur. Less than 8% of the CO2 would likely be captured. Ultimately the only way to decarbonise industry is through renewable energy sources. Another simple solution to the problem is recycling scrap metal or reusing products that might otherwise be disposed of. The whole purpose of CCS is to protect the bottom lines of fossil fuel companies, not to mention the subsidies they will receive from Government.

Another problem with CCS is the transport of CO2 and the extensive infrastructure required to accommodate this. This would involve a network of pipes transporting high pressure, low temperature CO2. As this video illustrates, a pipeline rupture could be catastrophic:

The explosive rupture of a pipeline and its associated shockwave pose immediate physical risks to nearby people and property. In areas closest to the pipeline, a release of CO2 can quickly drop temperatures to minus 60°C, coating the surrounding area with super-cold dry ice. At high concentrations, CO2 is a toxic gas and an asphyxiant capable of causing “rapid ‘circulatory insufficiency’, coma and death.”

And there is the issue of ‘environmental racism’, whereby fossil fuel infrastructure is predominantly located in impoverished and racial minority communities.

CCS requires more fuel to function. A paper published by Harvard University indicates that the energy penalty from implementing CCS could be as high as 40%. Once captured, there’s then no guarantee that all the CO2 can be sequestered. As I noted in the Net Zero article:

Research from the Massachusetts Institute of Technology has demonstrated that the predominate method that CO2 will be stored underground, isn’t as effective as first thought.

The study showed that CO2 coming into contact with brine deposits in underground rock formations — important for the solidification process into solid carbonates — actually causes the CO2 to solidify on first contact, creating a barrier to further CO2 disposition.

The next chapter in the fairy tale book of false solutions involves the miracle fuel of hydrogen. It’s a colourful story involving no less than five different shades of hydrogen: grey, brown, black, green and blue. What’s the difference between them? A detailed paper from Cornell University, How green is blue hydrogen?, explains.

Most hydrogen (96%) is processed from fossil fuels in a process called steam methane reforming (SMR) of natural gas:

heat and pressure are used to convert the methane in natural gas to hydrogen and carbon dioxide. The hydrogen so produced is often referred to as “gray hydrogen,” to contrast it with the “brown hydrogen” made from coal gasification.

Blue hydrogen is grey hydrogen with CCS plugged into it. It’s basically all the shades of grey hydrogen passed through the prism of CCS to produce a sparkling blue version that is being touted as the big solution to the climate blues. Billed as low or zero carbon, it seems the sky’s the limit for blue hydrogen. The IEA has been quick to pull out the bells and whistles. As the headline proclaims, The clean hydrogen future has already begun. The story begins with:

There is a growing international consensus that clean hydrogen will play a key role in the world’s transition to a sustainable energy future.

The main emphasis in the article is the cost of the various types of hydrogen. It does, however, make positive notes concerning green hydrogen, which is the cleanest version, produced by the electrolysis of water, provided renewable energy is used. It could play a role in a future energy mix. The article, though, does rely on a lot of assumptions.

Given that grey hydrogen is the dominant form, with some brown and black hydrogen derived from coal (brown from lignite, black from black coal), this is where almost all of the blue hydrogen will come from. And it does have a footprint. Even if some of the CO2 is captured, there is also a methane footprint. It requires a lot of energy under high pressures to produce.

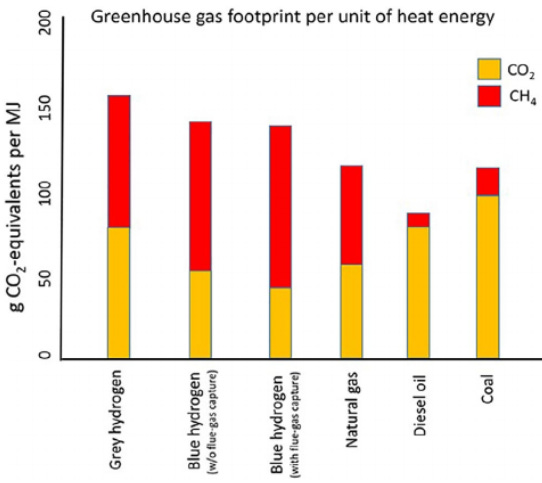

Fugitive emissions play a significant part in the methane footprint, accounting for up to 3.5% of the gas used in production. Taking everything into account, the overall footprint of both grey and blue hydrogen is higher than any other fossil fuel, as this table from the Cornell paper shows:

The notion that blue hydrogen is a clean, low emission fuel is therefore misleading. That leaves green hydrogen then as the only alternative. But just like blue hydrogen, the scale for production isn’t available yet. But guess where all the investment is going to go? Blue hydrogen. As the report notes:

Society needs to move away from all fossil fuels as quickly as possible, and the truly green hydrogen produced by electrolysis driven by renewable electricity can play a role. Blue hydrogen, though, provides no benefit. We suggest that blue hydrogen is best viewed as a distraction, something than (sic) may delay needed action to truly decarbonize the global energy economy, in the same way that has been described for shale gas as a bridge fuel and for carbon capture and storage in general. We further note that much of the push for using hydrogen for energy since 2017 has come from the Hydrogen Council, a group established by the oil and gas industry specifically to promote hydrogen, with a major emphasis on blue hydrogen.

But is green hydrogen a panacea? This is what I outlined in the net zero article:

A paper from the Proceedings of the IEEE, highlights shortcomings of using hydrogen as a fuel. Does a Hydrogen Economy Make Sense? poses a question that, according to author Ulf Bossel, is a resounding no.

The key point is that:

the energy problem cannot be solved in a sustainable way by introducing hydrogen as an energy carrier. Instead, energy from renewable sources and high energy efficiency between source and service will become the key points of a sustainable solution.

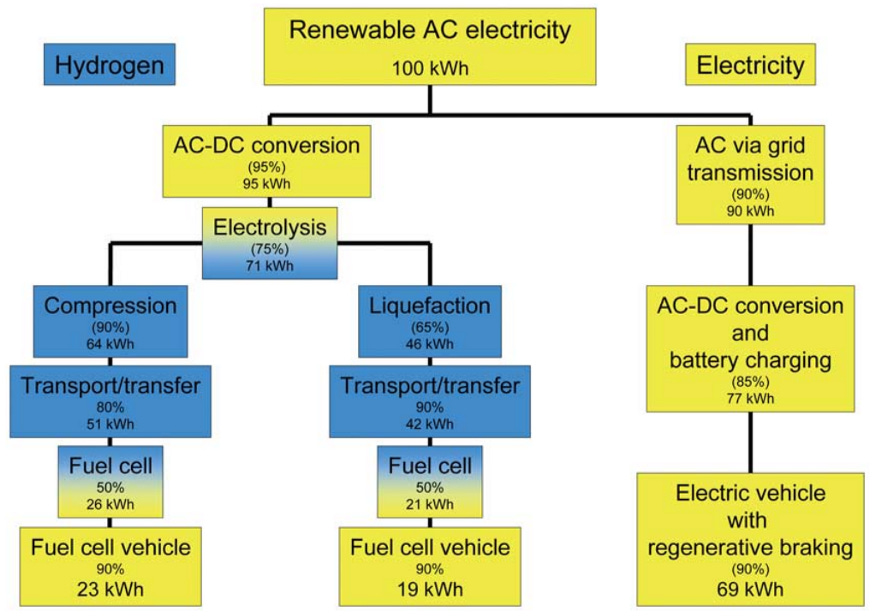

Bossel presents clear analogies using figures that demonstrate the differences in energy efficiency between hydrogen fuel and direct electrical use. The infrastructure involved in mass producing hydrogen would be immense, with a network of power plants purpose built for producing hydrogen.

High energy input is required to produce hydrogen. Then there are the stages in between, involving cooling, storage, transport and finding space to store at point of use. All these stages require high energy use.
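The way losses compound across such a chain can be sketched in a few lines. This is only an illustration of the principle: the stage names and efficiency values below are hypothetical placeholders chosen for the example, not Bossel's figures, and the function name is my own.

```python
from math import prod


def chain_efficiency(stage_efficiencies):
    """Overall efficiency of stages applied in sequence: the product of the parts."""
    return prod(stage_efficiencies)


# HYPOTHETICAL placeholder values for illustration only (not taken from Bossel).
hydrogen_path = {
    "electrolysis": 0.70,
    "compression_or_liquefaction": 0.85,
    "transport_and_storage": 0.90,
    "fuel_cell": 0.50,
}
direct_path = {
    "grid_transmission": 0.90,
    "battery_charge_and_discharge": 0.85,
}

hydrogen_overall = chain_efficiency(hydrogen_path.values())
direct_overall = chain_efficiency(direct_path.values())

print(f"Hydrogen route delivers {hydrogen_overall:.1%} of the original electricity")
print(f"Direct electric route delivers {direct_overall:.1%}")
```

Whatever the real values are, the point stands that every additional conversion step multiplies the loss, so a longer chain can never beat a shorter one built on the same source of electricity.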

The following infographic shows the energy losses incurred by a vehicle using hydrogen fuel cells compared with an electric vehicle powered directly from the grid.

To conclude:

Fundamental laws of physics expose the weakness of a hydrogen economy. Hydrogen, the artificial energy carrier, can never compete with its own energy source, electricity, in a sustainable future.

Bossel's argument is scientifically sound. However, considering the bigger picture and the potential problems surrounding renewable energy storage, hydrogen may have a role to play in a wider energy mix.

And that’s the crux. Green hydrogen also has limitations, but excess renewable energy can be used to produce hydrogen, using it as a relative storage medium.

Bioenergy is a prevailing con when it comes to ‘clean’ energy. In 2017, Manchester University’s Tyndall Centre published a paper, Generating Low-Carbon Heat from Biomass: Life Cycle Assessment of Bioenergy Scenarios. The paper states:

the UK is legally bound by the 2008 Climate Change Act […] to achieve a mandatory 80% cut in the UK's carbon emissions below 1990 levels by 2050, and a benchmark target to reduce carbon emissions by 35% below 1990 levels by 2020 […]. In the context of these targets, the UK's Renewable Energy Roadmap […] confirms the high likelihood that bioenergy systems will contribute an increasingly important role in the UK achieving its climate change, emission reduction and renewable energy contribution targets.

The paper reports on a series of Life Cycle Assessment pathways that have been considered relative to greenhouse gas (GHG) emissions of those pathways, comparing with counterfactuals, i.e. ‘what would have happened to the land/resource if not used for bioenergy.’

It should be noted here that this research was commissioned by the UK Department for Business, Energy & Industrial Strategy (BEIS) on behalf of the UK Government. This comes in the light of a major push towards bioenergy as a renewable and clean resource. The criteria on which the research is based are explained:

The UK Department of Energy & Climate Change (DECC) developed the ‘Bioenergy Emissions and Counterfactual’ (BEAC) model to provide a scientific tool for investigating the GHG impact of different biomass supply chains, and to evaluate the resulting GHG intensity of generated bioenergy.

The paper makes reference to a report issued by BEIS in 2014, which analyses the potential of biomass resources from North America. It notes that:

the demands of the UK biopower sector to 2020 are projected to exceed the UK's domestic supply and increasingly become reliant on imported resources.

These are the key criteria the results are based on:

Scenarios GHG performance results are compared against the equivalent UK GHG performance value for generating heat from natural gas (261.5kg CO2eqv./MWh heat) and against the UK Government's sustainability target for heat bioenergy generation (125.3 kg CO2eqv./MWh heat).
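To make the two benchmarks concrete, here is a minimal sketch of how a pathway's GHG intensity could be placed against them. The two thresholds come from the quote above; the function name, the category labels and the sample input values are my own assumptions for illustration, not part of the paper or the BEAC model.

```python
# Benchmarks quoted from the Tyndall paper (kg CO2eq per MWh of heat).
NATURAL_GAS_HEAT = 261.5
BIOENERGY_TARGET = 125.3


def assess_pathway(intensity_kg_per_mwh):
    """Place a bioenergy pathway against the two quoted benchmarks."""
    if intensity_kg_per_mwh <= BIOENERGY_TARGET:
        return "meets sustainability target"
    if intensity_kg_per_mwh < NATURAL_GAS_HEAT:
        return "better than natural gas but misses target"
    return "no better than natural gas"


# How far below natural gas the Government's target sits.
target_cut_vs_gas = 1 - BIOENERGY_TARGET / NATURAL_GAS_HEAT

# Hypothetical intensities, purely to exercise the function.
for sample in (100.0, 200.0, 300.0):
    print(sample, "->", assess_pathway(sample))
print(f"Target is {target_cut_vs_gas:.0%} below the natural gas figure")
```

The target therefore asks bioenergy heat to come in at roughly half the emissions of gas, which is the bar the scenarios in the paper are measured against.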

The paper emphasises ‘developing best practice bioenergy pathways that avoid high GHG impact methods.’

The overall conclusion from the research is that some sources of biomass could viably be used, e.g. agricultural and food wastes, though with the caveat of whether these could instead be put to other uses such as composting or natural fertiliser. As for energy crops, the results were variable depending on the type of crop used and how it compared with the counterfactuals. The paper makes clear that:

The choice of land dedicated for the production of energy crops, and the counterfactual use of that land is key in determining the potential GHG performance of energy crop bioenergy pathways. If the land would otherwise have reverted to a natural ecosystem (forest), any GHG savings made through the production and conversion of energy crops will likely be far outweighed by the carbon uptake from the atmosphere that would otherwise have occurred.

The paper also makes reference to an early EU report (2007), which concluded:

that the non-inclusion of counterfactuals when analysing the GHG impact of bioenergy pathways represents a basic but fundamental assumption error, as failure to account for the production or mitigation of GHG emissions within a counterfactual can result in a bioenergy scenario being wrongly identified as potentially saving or resulting in GHG emissions.
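The effect of including or omitting a counterfactual can be shown with a deliberately simplified sketch. It assumes the net impact is just the pathway's emissions minus what would have happened anyway; the function name and all the numbers are hypothetical and are not drawn from the Tyndall paper or the BEAC model.

```python
def net_ghg_impact(pathway_kg_per_mwh, counterfactual_kg_per_mwh):
    """Net emissions attributable to a bioenergy pathway.

    A negative counterfactual means the land or resource would otherwise
    have absorbed carbon (e.g. reverting to forest); a positive one means
    it would have emitted anyway (e.g. waste left to decay).
    """
    return pathway_kg_per_mwh - counterfactual_kg_per_mwh


# HYPOTHETICAL values in kg CO2eq per MWh, for illustration only.
pathway = 100.0

# Land that would otherwise have reverted to forest and taken up carbon.
forest_case = net_ghg_impact(pathway, -300.0)

# Waste that would otherwise have decayed and released emissions.
waste_case = net_ghg_impact(pathway, 250.0)

print(f"Ignoring counterfactuals: {pathway:+.0f}")
print(f"Forest counterfactual:    {forest_case:+.0f}")
print(f"Waste counterfactual:     {waste_case:+.0f}")
```

The same pathway looks like a modest emitter on its own, a large net source once forgone forest uptake is counted, and a net saving where the feedstock would have emitted anyway, which is exactly why leaving the counterfactual out can point policy in the wrong direction.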

These points are particularly important as we shall see later. The paper concludes with recommendations for policy makers. But policy makers appear to be setting their own agenda.

In 2003, the UK’s largest power station, Drax, began the transition from coal to biomass. It fully converted its first generating unit to run on compressed wood pellets in 2013, ‘lowering the carbon footprint of the electricity it produced by more than 80% across the renewable fuel’s lifecycle,’ the company claimed. However, a study commissioned by the Natural Resources Defense Council (NRDC) challenges the claims made by Drax. Underpinning the Drax process and UK Government policy is bioenergy with carbon capture and storage (BECCS). This is based on the idea that adding:

carbon capture and storage (CCS) technology to a biopower plant will create a ‘carbon negative’ power station (i.e., resulting in a net removal of CO2 from the atmosphere). ‘Carbon negative’ power generation would help to offset emissions from hard-to-decarbonize sectors and deliver the Government’s commitment to zeroing out net economy-wide emissions by midcentury.

…Scientists are clear that this simplistic picture of bioenergy and BECCS is flawed. In particular, biopower generated from forest biomass without carbon capture is rarely carbon neutral.

This is what the Tyndall study indicated, as most of the biomass used at Drax is sourced from forests in the southern US, processed into pellets and imported by Drax. Then there is the ‘additional energy intensive processes required to produce pellets’, noted by the Tyndall paper. Add to that, transport emissions.

There are other related impacts noted in the NRDC report:

much of the wood burned for electricity in the UK is cut down and shipped in from ecologically sensitive forests overseas, destroying habitats and endangering wildlife. And unlike solar and wind, large-scale wood-burning for power emits dangerous air pollution that causes an array of health harms. Wood pellet mills likewise release unsafe air pollution, at times at levels that violate plant permits and U.S. law and are overwhelmingly cited in low-income communities and communities of color. Drax has been cited multiple times for serious air quality breaches at its U.S. pellet mills, most recently in February.

Meanwhile, the taxpayer is subsidising Drax to the tune of £1 billion a year, with an estimated £31.7 billion in new subsidies to push BECCS at the plant. This is at odds with the Tyndall study, which was of course commissioned by the Government itself. Its comments for policy makers state:

policies and sophisticated bioenergy GHG impact assessment methodologies should be developed that evaluate the whole life cycle and specific steps of biomass resources and bioenergy pathways. Policies should promote and incentivise the: use of specific biomass resources that will result in the mitigation of high GHG impact activities; and use of bioenergy pre-treatment and conversion technologies that will deliver low carbon bioenergy.

Also noted:

policy focus and incentive mechanisms should be developed that take into consideration the synergies and consequences of different bioenergy pathways, moving away from the focus of bioenergy ‘maximise renewable generation’ rather than to simply ‘reduce GHG emissions’.

Evidently the Government hasn’t been following its own evidence base. But the UK has been influenced by the EU. A bioenergy driven Drax would not have got off the ground if it wasn’t for EU policy and subsidies. A report by the Transnational Institute (TNI), Bioenergy in the EU, outlines how EU renewables policy developed, with bioenergy at its heart.

As part of the EU’s renewable energy framework, the Renewable Energy Directive (RED) was introduced in 2009:

The RED thus put in place mandatory targets of the kind requested by the industries which stood to benefit from support for bioenergy:

• An overall goal of generating 20% of energy from renewable resources by 2020 (the relative ease and cheapness of upgrading ageing coal-fired power plants to burn biomass quickly made bioenergy the technology of choice in this sector)

• A binding target of 10% of energy consumed in the transport sector to come from renewable sources by 2020 (with up to 7% allowed from first-generation agrofuels based on food crops).

This opened the door for the UK Government and Drax to begin conversion to bioenergy under the guise of fulfilling mandatory renewable energy targets (see below).

Bioenergy also benefits from a curious carbon accounting loophole. The IPCC has stipulated that direct emissions from bioenergy should be reported as zero, despite bioenergy not being considered as carbon neutral. This is due to land use changes and GHG emissions (as covered by the Tyndall study). As the report notes:

the result of this IPCC guidance is that a large proportion of the emissions caused when biomass is burned for energy generation are not accounted for at all. This omission is apparently justified in the name of avoiding possible double-counting, with under-reporting of emissions considered preferable to over-reporting. This loophole in the IPCC methodology is exploited to the full by EU Member States and energy utilities, who are converting coal-fired power plants into massive biomass burners, causing equally massive emissions that go unreported.

The report also refers to the REDD+ scheme (Reducing Emissions from Deforestation and Forest Degradation), set up by the UNFCCC. It in effect allows countries to offset bioenergy emissions by funding reforestation or forest conservation projects. It has, though, been criticised as a get-out clause, allowing countries to pay:

for supposed additional forest protection or reforestation in the global South. Critics also argue that REDD+ falsely blames peasant agriculture and shifting cultivation for forest loss, restricts local communities’ access to land, and destroys their livelihoods while failing to tackle the underlying causes of deforestation: industrial agriculture and logging, mining, and large-scale infrastructure development.

The report concludes:

Current EU targets for renewable energy use are largely met through bioenergy. Aggressive incentives through subsidy schemes, trade, and legislation aimed at promoting bioenergy have had a devastating impact on land use, livelihoods, food security, climate, biodiversity and water, particularly in countries in the global South that export biomass to the EU for energy production. Bioenergy has by far the largest land footprint of all renewable energy forms, and large-scale consumption will lead to increased greenhouse gas emissions at a time when immediate and steep emission reductions are urgently needed.

In 2016, the EU approved funding support for Drax, to facilitate its conversion of a generating unit to biomass. Prior to that in 2014, the EU had greenlighted the construction of its carbon capture and storage (CCS) project, costing £238 million. With the UK now out of the EU, the UK taxpayer will likely pick up the tab for any future funding. But serious questions are being asked about the exaggerated and misleading claims over bioenergy.

In January 2020, environmental lawyers ClientEarth announced they were taking the UK government to court. ClientEarth’s concerns over Drax began in 2017, when the Government announced an estimated £1.3bn in subsidies to convert Drax’s third unit of six from coal to biomass, covered by the EU as noted above. As part of the deal:

“Drax will receive a guaranteed payment of £100 per MW/hour – which is roughly double the current market price for electricity. This is even more per MW/hour than is planned for the heavily criticised new nuclear plant at Hinkley Point. The Commission has not specified a cap on the subsidies Drax will receive for this project over the coming years."

ClientEarth’s Susan Shaw also stated:

“Big environmental question marks continue to loom over biomass and whether it is in fact renewable on this scale. Biomass has been classified indiscriminately as a ‘zero carbon’ energy source but this stems from flaws in the way the EU and US account for carbon. Neither the UK nor EU intend to address these questions until 2020 at the earliest.

“With these subsidies, the government is paying for damaging infrastructural lock-in that will last for many years to come."

What triggered the legal action was Drax’s decision to convert its remaining units over to gas, instead of phasing them out as part of the transition away from coal, which went contrary to the UK’s own climate commitments. As a result, the Planning Inspectorate ruled that ‘the project’s climate impacts outweighed any benefits’ and recommended blocking the project. But the Government ignored the recommendation and approved the project anyway.

In February 2021, Drax abandoned its plans to convert the remaining units to gas. This was despite a recent High Court ruling that upheld the Government’s decision ‘that the Government can refuse major projects on climate change grounds’. However, the controversy over bioenergy hasn’t gone away. And just to sum up the whole charade over bioenergy (emphasis added):

A US facility owned by Drax in Mississippi was recently fined $2.5m for breaching air pollution rules. The local Department for Environmental Quality took action after the plant, which produces wood pellets to supply the Selby power station, was emitting illegal levels of volatile organic compounds – an extremely harmful gas.

The energy firm, which receives hundreds of millions of pounds a year in UK subsidies and tax breaks for its biomass, has also faced criticism over its purchase of a wood pellet plant in Canada that currently burns natural gas to dry wood fibres used in biomass production.

There are other false solutions, some rather bizarre, that won’t be covered here. The focus has been on high profile issues that are likely to have major impacts. The next section considers the ‘real world’ solutions.

Real World Solutions

A return to the Anthropology and Climate Change report sparks off the discussion on how to tackle the climate crisis. Three concepts underpin the discourse: adaptation, vulnerability and resilience. Mitigation is usually included, although that could be regarded as a form of adaptation.

Adaptation has two broad contexts: social, which falls within the realms of human experience and structure, and ecological, which defines how species adapt and survive in certain environmental conditions. The report uses the term “adaptive capacity” to refer to:

the ability of social institutions (households, communities, organizations, networks) to use knowledge and experience to foster flexibility in problem solving and to enable reconfigurations without losing functionality.

This is dependent on how humans perceive, understand and react to problems and how inclusive or exclusive the problem solving discourse is within social structures. Climate change is a novel problem for which ‘there is no ready culturally integrated institutionalized response.’ Quite often this will involve implementing solutions that cope with a problem but fail to deliver a meaningful adaptation strategy, e.g. applying a technological solution that may offer short term respite but is not a long term solution.

The UN Framework Convention on Climate Change (UNFCCC) defines adaptation as:

adjustments in ecological, social, or economic systems in response to actual or expected climatic stimuli and their effects or impacts. It refers to changes in processes, practices, and structures to moderate potential damages or to benefit from opportunities associated with climate change. In simple terms, countries and communities need to develop adaptation solution and implement action to respond to the impacts of climate change that are already happening, as well as prepare for future impacts.

Adaptation covers a broad range of measures, which are laid out in UNFCCC and Intergovernmental Panel on Climate Change (IPCC) reports. However, this isn’t really happening effectively on a global scale and there are concerns that:

the focus in national adaptation plans is mostly on technical and infrastructural interventions with little, if any, attention to social and institutional issues.

The issue here is the long term nature of climate change. Political and social systems focus on short term problems. Attempting to integrate and interconnect different systems and processes can be difficult and problematic. Planning for climate change therefore becomes an immense challenge at temporal and spatial levels.

Vulnerability essentially concerns the ability of people to endure specific environmental hazards. Everything has some degree of vulnerability. With respect to climate change:

the concept of vulnerability in anthropological research explicitly ties environmental hazards and specifically climate change, and its effects to the structure and organization of society.

This is closely linked to the concept of resilience. Resilience was initially an ecological concept that denoted the ability of natural systems ‘to respond to disturbance by resisting damage and recovering quickly.’ This attribute has found its way into social systems. From a systems perspective this has produced the term, social-ecological system (SES), combining natural science and social science variables. This revolves around the ‘ability of groups to tolerate and respond to environmental and socioeconomic constraints through adaptive strategies.’ However the lengthy time frames associated with climate change may prove difficult to incorporate into an effective SES framework. As such:

Robust—resilient—local and regional management requires a comprehensive grasp of the social, historical, cultural, and political aspects of people in their environments.

How resilience is applied is subject to local and national dynamics, including politics and power and whether it can be incorporated within adaptation strategies. Developing coherent policies with respect to this will be a complex affair.

The three concepts are invariably interconnected. How they are applied to the threat of climate change is dialectical in nature. As such there may be inherent contradictions, e.g. resilience may not necessarily equate to a lack of vulnerability. In other words, building an SES framework will be a pragmatic process as well as a theoretical one. As the report notes:

As currently practiced, much climate change adaptation today does not address the real adaptive challenge which requires questioning the beliefs, values, commitments, loyalties and interests that have created and perpetuated the structures, systems, and behaviors that drive climate change. Indeed, current definitions of climate change adaptation are positioned far more to accommodate change rather than to challenge the causes and drivers, leaving current development approaches essentially unchallenged.

This then reflects on the current structures that revolve around the UN based climate action paradigm. These structures are culturally exclusive in the sense that they do not accommodate other social entities, and are dominated by elitist values. Given what’s been discussed already within this and previous articles, the current set-up would, ipso facto, be incapable of producing an effective SES framework that would shift towards a workable solution to the climate crisis. This is where what the report describes as ‘community-centered approaches’ to climate change come in.

People’s perceptions of climate change will be shaped by personal and cultural predilections. This will depend to some degree on exposure. Climate impacts will happen at a local level and will affect communities directly. This is already happening, and it is at this level that ways of dealing with the threat will have the greatest relevance.

Climate impacts are location specific, e.g. the urban heat island effect or sea level rise impacting low-lying islands in the South Pacific. But they can also follow certain pathways, e.g. poor and rich communities may live in the same area, but the impacts will be different, with poor communities more vulnerable. And, as the report observes, women are most likely to be affected by changing climate, especially in developing countries. Race is another issue. All of these lead to uneven impacts on certain communities. This brings in environmental justice, ‘which provides the foundation for climate justice efforts.’ As such:

Climate justice addresses the inequity those least responsible for greenhouse emissions are often the most impacted by climate change’s adverse effects.

Working with communities can help to establish strategies for dealing with the impacts of climate change. This entails making connections with various links that can be global or local and identifying specific impacts. Every community will have unique connections as well as common links. Decisions should come from the community:

Facilitating community decision-making and negotiation reduces negative outcomes for communities and gives the community ownership of their future.

Community engagement is therefore vital and should be conducted in a spirit of mutual understanding, respect and sharing of ideas. Anthropology appears to be an ideal discipline for engaging with this area. As an example:

“Dialoguing Local and Scientific Knowledge in Northeast Siberia” shows how one such collaboration between an anthropologist and a permafrost specialist resulted in increased understanding about the local effects of climate change for the local affected communities, the scientists (social and natural), local, regional and republic organization and policy-makers.

Scientific expertise and local indigenous knowledge can go a long way towards achieving manageable solutions, through an ‘inter-epistemological approach’. This extends to working with other areas of expertise in other disciplines, expanding interdisciplinary cooperation, in diverse areas such as the arts, psychology, social sciences and economics.

The report makes reference to complexity science, which is the study of complex adaptive systems. This is related to systems theory, but expands the dynamics of systems theory to account for wider potential influences and interactions. It taps into the notion of the ‘butterfly effect’. This will be covered in more detail later.

In a religious context, it has been observed that some cultures ‘consider most of the natural world to be sentient, embodied by spirits who are active agents’. This means accommodating local perceptions of fluctuating conditions, taking account of belief systems and how science can interact effectively. It brings in the concept of ontology to differentiate between western science and the spiritual and cultural perceptions of other groups.

Disruption of the system is another potential solution. In 1942, Joseph Schumpeter published his work Capitalism, Socialism and Democracy, in which he coined the term ‘creative destruction’. In a paper published in the Journal of Evolutionary Economics, William Kingston (Trinity College) discusses Schumpeter and the end of Western Capitalism. He highlights Schumpeter’s comment that, ‘capitalism would eventually produce an “atmosphere of almost universal hostility to its own social order”’ and that the end result would be an imposition of socialism, due to capitalism’s ‘tendency towards self-destruction’. There’s a prevailing argument that this is what is actually happening today.

Yet thirty years ago, Schumpeter’s ideas disappeared into the wilderness following the collapse of the Soviet Union. The ending of the Cold War was seen as a vindication of the capitalist system. Fast forward to the 2008 financial crash and it’s an entirely different story. It’s this event that underpins Schumpeter’s ideas.

The financial system is the life blood of global neoliberalism. This has previously been covered in some detail.

A key observation from Schumpeter was that the financial system was geared up to ‘create money out of nothing’. A major part of this transition was the movement of the system from unlimited liability to limited liability. This was effectively how the industrial revolution’s expansion was enabled, through the creation of money. Then again, there’s Adam Smith’s ‘invisible hand’, which can only grasp whatever the law says it can grasp. Take away the law and it grasps whatever it wants:

As of Smith’s theory, ‘the working of self-interest is generally beneficent, not because of any natural coincidence between the self interest of each and the good of all, but because human institutions are arranged in directions in which it will be so’ (emphasis added). As already stressed, the relevant institutions are individual property rights, whose unique value is that they can civilize self-interest by forcing it to serve the public good also. They can, but they need not.

With the introduction of credit, bubbles begin to develop. Schumpeter viewed the process in terms of cycles, what we would call ‘boom/bust’ cycles. Technological cycles linked into this process. This is what happened in the 1920s - a period of innovation and expansion of financial markets. Then came the 1929 crash. The same thing happened in the early 2000s, followed by the crash in 2008. The paper notes:

Lack of effective discipline on bankers is the primary reason why the great financial crash of 1929 originated in the United States. The stock exchange meltdown in that year owed everything to the ease with which buyers of stocks and shares had been enabled to operate ‘on margin’ through bank loans. These were made so freely available during the 1920s that when the market collapsed, thousands of banks failed.

Sound familiar? The Glass-Steagall Act came into force in 1933 in order to curb the excesses that led to the crash. But guess what? Through neoliberal expansion:

Glass-Steagall was repealed, partially in 1980 and completely in 1999, permitting a new wave of risky investment involving the creation of money from nothing on an enormous scale.

History repeated itself as Schumpeter had largely predicted. This is where his concept of ‘creative destruction’ comes in. With the wholesale changes in the way the financial sector does business, with the focus on making money out of money, or out of nothing - typical of today’s stock market - there is no innovation, no new ideas to invest in. Outwith the financial sector there is the classic example of the demise of Kodak, as digital photography took over from film. But ‘too big to fail’ seems to have frozen creative destruction - for now.

The big problem is lobbying. That’s what has resulted in State capture and political capture. Governments no longer serve the people; they serve corporate power through lobbying. It was lobbying that destroyed Glass-Steagall. This prompted Hyman Minsky ‘to formulate his Financial Instability Hypothesis, published in 1982,’ which postulates cycles of economic growth, beginning with initial investment that boosts the economy. This then generates financial optimism, leading to more risky investments. This reaches a stage when more investments come from banks that ‘create money from nothing’:

Minsky calls this the ‘euphoric’ phase of an economic cycle, during which interest may be able to be paid, but there is no ability to repay capital. Finally, in what he designated the ‘Ponzi’ phase after a notorious swindler of the public, money is lent and borrowed almost completely in anticipation of increasing asset values. When eventually these fail to materialise, the cycle ends in a sudden and massive downturn into recession or depression.

That’s exactly what happened in the 1920s and 2000s. Adam Smith believed that altruism could drive the free market. But:

Our limited store of altruism can even stretch to acceptance of whatever rules there are as long as they appear to be roughly in the public interest. In contrast, the capture of politicians and the laws they make, by private interests, destroys whatever altruistic bonds may hold a society together. Schumpeter was indeed right to hold that ‘the State is only a separate, distinguishable social entity’ to the extent that ‘it opposes individual egoism as a representative of a common purpose.’

The paper quotes Judge Richard Posner (emphasis in original):

The adjustments that will be needed, if the economy does not outgrow an increasing burden of debt, to maintain our economic position in the world, may be especially painful and difficult because of features in the American political scene that suggest that the country may be becoming in important respects ungovernable. The perfection of interest-group politics seems to have brought about a situation in which, to exaggerate just a bit, taxes can’t be increased, spending programs can’t be cut, and new spending is irresistible ...these tendencies are bipartisan.

The ultimate result of all this is gross inequality. Since the repeal of Glass-Steagall, most of the wealth generated ‘went to the richest tenth of that one per cent’. Within the context of the bailing out of the banks in 2008, it would appear Schumpeter was correct in assessing that capitalism would be replaced by socialism - at least a particular brand of well disguised socialism.

To sum up, creative destruction is a process driven by entrepreneurs who set up new, innovative businesses that displace incumbent businesses. The example of Kodak was used above. There are other examples. How does this feed into the idea of disruption - or disruptive innovation, as it tends to be known? A 2020 paper published in the journal Technological Forecasting & Social Change, Abundance – A new window on how disruptive innovation occurs, looks at disruptive innovation through the lens of abundance and scarcity.

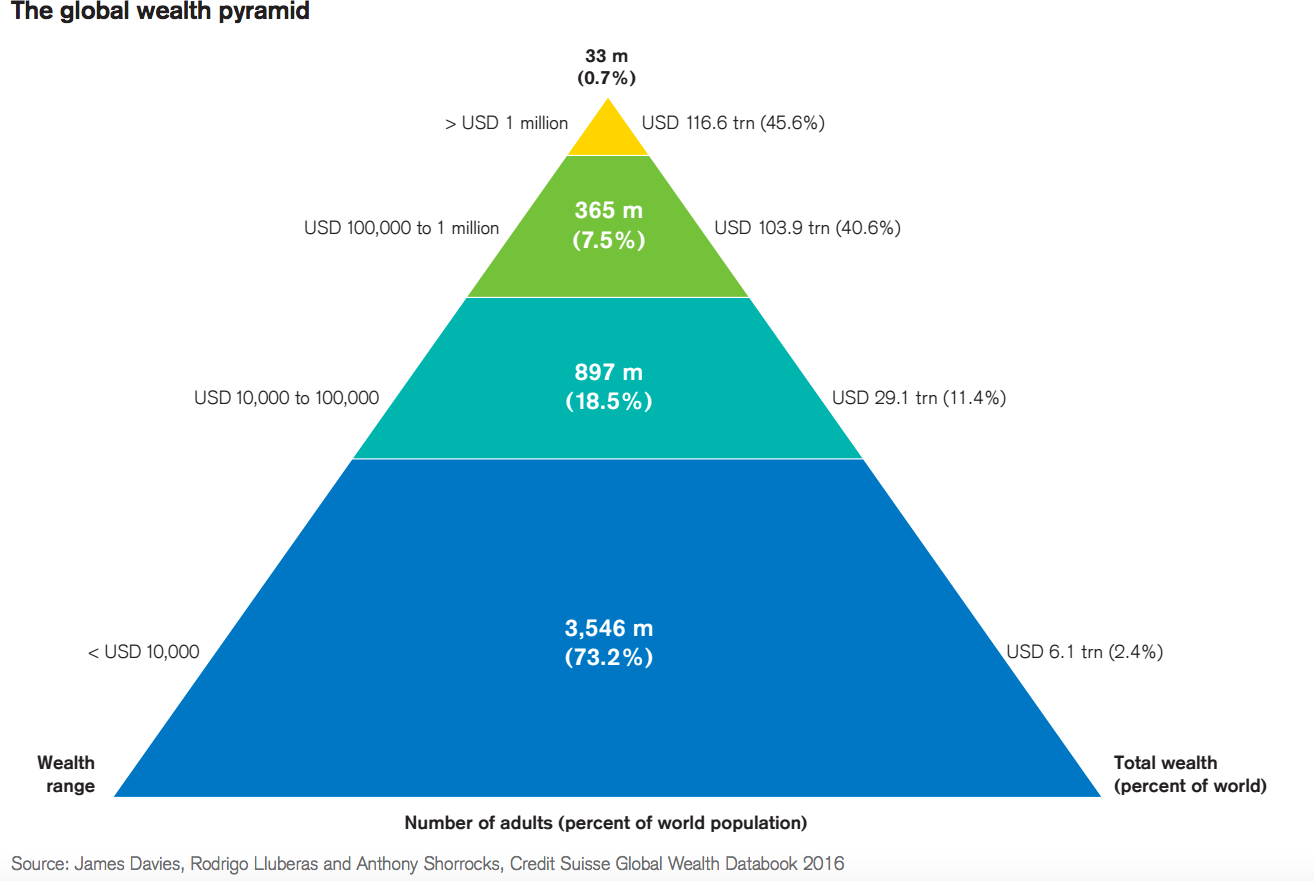

It highlights the unequal distribution of wealth in the world, making reference to an Oxfam report, which noted that ‘the eight richest people in the world own more wealth than half of the world.’ The concept of a wealth pyramid is often used to illustrate how the bottom tier props up everything above.

However, around 700 million people live in poverty and around two million people die each year from preventable illnesses. There are other mortality issues affecting millions, predominantly in African and Asian countries. This is due to scarcity problems such as lack of food and health care. But rich countries have the opposite problem, namely abundance or excess. This is reflected in the overproduction of food, which leads to excessive waste and overconsumption, generating market imbalances:

For example, in 2014 US government ordered Cherry farmers in Michigan to destroy 30 million pounds of cherries to regulate the cherry crop as per USDA guidelines.

Such market distortions are commonplace, creating what is effectively market failure. As the paper points out:

Waste negatively effects the environment and aggravates scarcity in other parts of the world. Thus, resource abundance can be seen as a curse rather than a blessing.

Natural resources don’t always determine economic balance. Resource-rich countries often experience high rates of inequality, whereas some resource-poor countries, e.g. Japan, tend to be wealthy overall. Innovation is the determining factor, the paper argues.

Innovation itself comes from a variety of sources, from individuals to teams to established firms. Most innovations tend to come from new startups with fresh ideas. Innovation has been predominantly associated with technology, but is now increasingly applied to other areas. The ‘disruptive’ aspect was meant to differentiate from the technological, although even ‘hi-tech’ could also be regarded as disruptive.

Disruptive innovation serves as the key to alleviating scarcity at the bottom of the pyramid, based on the theory of social capital. Social capital is a measure of individual or group networks and contacts. People in positions of power and wealth tend to have more social capital. In other words, they are well connected: ‘It’s not what you know, it’s who you know’. According to the paper, this can be a double-edged sword:

a higher level of social capital in some communities creates caste inequality, ethnic exclusion, and gender discrimination. Specifically in the context of innovation, social capital at an extremely higher level can lead to redundant information that may result in generation of excess noise or group think that can inhibit germination of innovation.

But in general, social capital can be effective:

innovation is a knowledge based activity and a function of knowledge resident in individuals, group, or firms. And social capital allows innovators to utilize their networks for knowledge search and acquisition at a significantly lower transcation [sic] cost. Even for regional and societal level factors for innovation, social capital is considered a key ingredient.

Social capital can be an important determinant in the buoyancy of certain economic markets. But in abundant markets, there is a tendency to conform to the status quo. If everything is nice and rosy, why change? Of course, not everyone is likely to share in the abundance, and some see the need for change. But that will very much depend on how social capital prevails and manifests itself within society. The paper then draws some important conclusions:

Our model may allow philanthropists/social entrepreneurs and other support organizations to understand why certain parts of the world, despite increasing supply through means external to the community (e.g. money or food), have been unable to solve the scarcity problem. These well-intentioned individuals and organizations need to examine ingrained social capital of such communities to devise solutions that can be supported.

And:

Companies that want to focus on innovation and specifically disruptive innovation should encourage non-conforming behavior in their employees and utilize various means to encourage creativity and individualism.

This is a role that social enterprises and co-operatives, as well as civil society, can undertake. With the encroaching climate crisis, this could represent a vital component in changing the status quo and deconstructing the system. How would this play out? A starting point could be a paper published by the Tyndall Centre that considers Disruptive low-carbon innovations.

Starting with the example of how the micro-computer (PC) eventually disrupted the computing industry, from the slow and cumbersome models of the late 1970s to today’s ubiquitous machines, the paper looks at the renewable equivalent of the IT revolution. The point here is that early low carbon technologies were inefficient but have since improved. But the disruptive component is allowing energy users to generate power for their own use and for distribution to the grid. There is a caveat here. It’s called the rebound effect. This is when a person or entity adopts a cost saving means of conserving energy, e.g. home insulation, but spends those savings on holiday flights. What is required isn’t just a change in infrastructure but also in behaviour.
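To make the rebound effect concrete, here is a rough back-of-the-envelope sketch in Python. It is not taken from the Tyndall Centre paper: the function name, the parameters and every figure in it are hypothetical and chosen purely for illustration. It simply subtracts the emissions caused by re-spending the money saved from the emissions the efficiency measure avoided.

```python
def rebound(co2_avoided_kg, money_saved, share_respent, co2_per_unit_spent_kg):
    """Return (net CO2 saving in kg, rebound fraction).

    All inputs are illustrative assumptions, not measured data:
      co2_avoided_kg        - emissions avoided by the measure (e.g. insulation)
      money_saved           - money saved on energy bills over the same period
      share_respent         - fraction of that money spent elsewhere (0 to 1)
      co2_per_unit_spent_kg - emissions per unit of money re-spent (e.g. on flights)
    """
    if co2_avoided_kg <= 0:
        raise ValueError("co2_avoided_kg must be positive")
    if not 0.0 <= share_respent <= 1.0:
        raise ValueError("share_respent must be between 0 and 1")

    # Emissions created by spending the savings on something else
    co2_from_respending = money_saved * share_respent * co2_per_unit_spent_kg

    # What is actually left of the original saving
    net_saving = co2_avoided_kg - co2_from_respending

    # Fraction of the original saving that has been cancelled out
    rebound_fraction = co2_from_respending / co2_avoided_kg
    return net_saving, rebound_fraction


# Hypothetical household: insulation avoids 1,000 kg of CO2 and saves 300
# in bills, all of which goes on flights at an assumed 2 kg of CO2 per unit spent.
net, fraction = rebound(1000.0, 300.0, 1.0, 2.0)
print(f"Net saving: {net:.0f} kg CO2, rebound: {fraction:.0%}")
```

With these made-up numbers, more than half of the saving disappears. A rebound fraction above 1 would mean the re-spending emits more than the measure saved, which is why behaviour matters as much as infrastructure.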

The paper uses Tesla as an example and argues that electric vehicles (EVs) aren’t necessarily disruptive. The original Model S was high end, going for $70,000, so it appealed to wealthy customers. But the more recent Model 3 came in at $40,000, appealing to the mid-market. Through time, more affordable models will come through, which tends to happen with new innovations. But these aren’t disruptive in the strict sense as they are a direct substitute for the internal combustion engine (ICE). There are other issues linked to EVs - that’s another story. But the paper suggests that other forms of EVs could be disruptive, such as EVs for disabled people or, more recently, electric bicycles. From a transport perspective, the only realistic solution is an effective, well-integrated public transport infrastructure.

The paper identifies four areas where disruption could occur. These are: mobility; buildings & cities; food; and energy supply & distribution. Mobility was addressed above. But other influences here are:

urban planning, compact cities, car-free communities, shifts from motorised modes to public transport or active modes (walking, cycling), and ‘disappearing traffic’ – the removal of road infrastructure and restoration of car-free urban environments.

For buildings & cities, the paper lists:

internet of things, net zero energy homes, and distributed PV-storage systems.

Under food:

urban and community-based growing, reduced food waste schemes, and modular hydroponic and aquaponic systems.

And energy supply & distribution:

peer-to-peer trading, vehicle-to-grid, and community or district energy networks.

Many of these innovations won’t benefit everyone directly, but can act as a transition towards a more inclusive system, depending on status, i.e. high-tech innovations may take time to filter through to mainstream application, whereas social innovation may have a more immediate impact. Ultimately, as the paper concludes:

Disruptive innovation is a field of business and management scholarship interested in the transformative potential of novel goods and services for consumers. Its outcome is the dislodging of incumbent firms and interests from entrenched market positions.

That then is the challenge. And this paper poses an interesting basis for further research and debate on the issue.

Final Points

There are so many other discussion points that could have been included in this article. Saving the planet is no simple thing! But we are literally all in this together. Civil society and other parties need to come together and engage in debate. There are signs of that happening in the wake of the failed COP26 summit. But there is a large elephant stomping around the room. The blueprint for change has already been in place for at least 30 years. As I commented previously:

In 1983 the World Commission on Environment and Development (WCED) was established. It was chaired by the Prime Minister of Norway, Gro Harlem Brundtland. The objective of the WCED was to create a united international community with shared sustainability goals by identifying sustainability problems worldwide, raising awareness of them, and suggesting the implementation of solutions. The result was Our Common Future, published in 1987, also known as the Brundtland Report. It led to the Summit for Sustainable Development at Rio de Janeiro (Earth Summit) in 1992.

The UN described the Earth Summit as, ‘A new blueprint for international action on the environment’. That sums it up. 30 years have been intentionally squandered. Now we face a climate emergency and all we have are false solutions and a global circus.

Future articles will consider other issues that were omitted here. The concept of ‘net zero’ will be explored in more depth, plus a closer look at the so-called Glasgow Pact. And what really happened to that 30 years?
